AI Act Gap Analysis & Response Plan

Strengthen AI governance and align with the EU AI Act and ISO standards

Clarity on risks. Actionable compliance steps. Stronger trust in AI.

Minimise AI-related risks through a structured AI Management System.

Align with legal, ethical, and forward-looking regulatory standards

Distinguish yourself as a responsible and credible AI-driven organisation

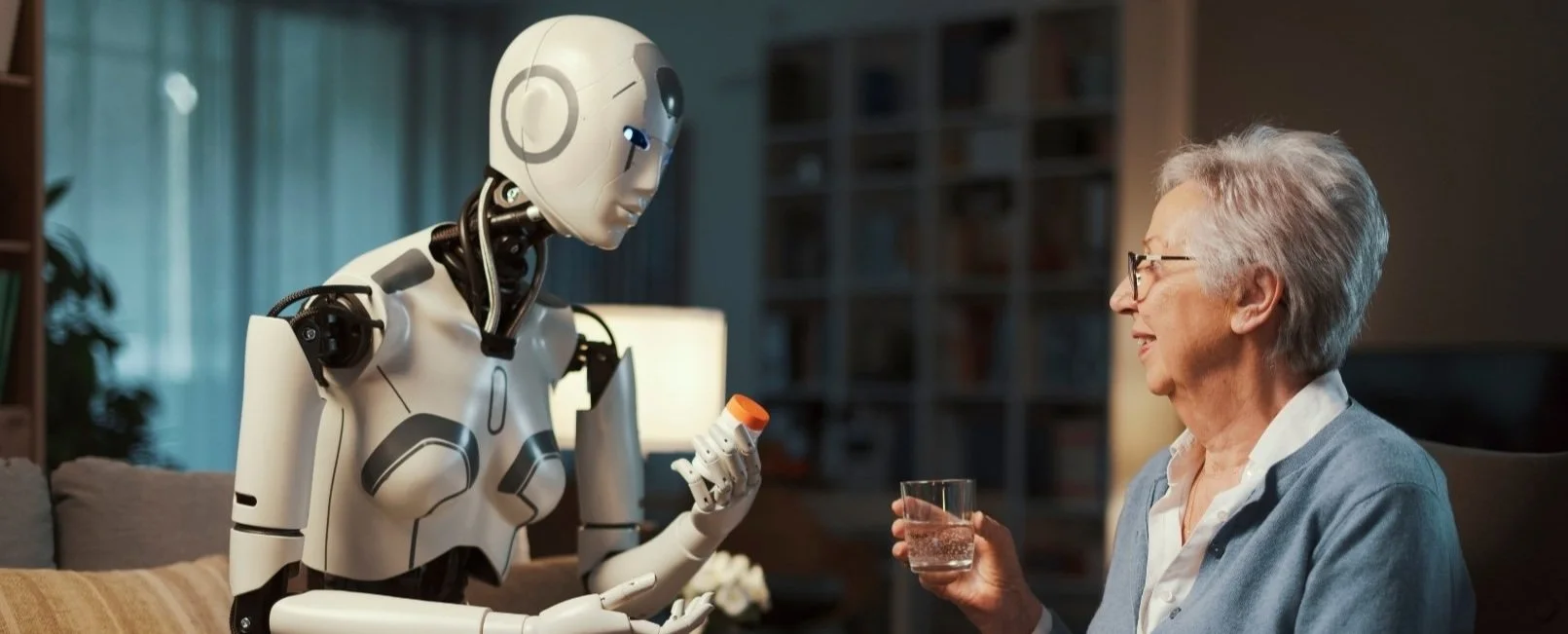

A Framework for Responsible AI

An AI Gap Analysis gives organisations a clear view of how their current practices align with requirements for governance, accountability, and data protection. It examines policies, processes, and oversight to identify gaps that may undermine compliance or ethical use. The outcome is a practical response plan that supports the responsible development and deployment of AI systems, ensuring they are transparent, fair, and aligned with both regulatory and stakeholder expectations.

Our Approach

Assess

Define scope, review policies, governance and data handling practices.

Analyse

Benchmark against legal requirements and best practices, identify gaps and prioritise risks.

Plan

Provide a comprehensive assessment with clear remediation steps, tailored to your risk profile.

Support

Ongoing advisory support helps implement recommendations, train staff and adapt to regulatory or business changes.

The Result: Trusted AI Governance in Practice

Support for EU AI Act Compliance

Structured oversight and documentation that strengthen your organisation’s ability to demonstrate compliance with the EU AI Act.

Independent AI Oversight

Support with conformity assessments, documentation, and reviews to ensure AI systems are transparent, defensible, and aligned with regulatory standards.

Competitive Advantage in Procurement

Clear evidence of maturity and foresight in AI governance that strengthens credibility in contracts and partnerships.

Frequently Asked Questions

-

An organisation should conduct an AI governance analysis when determining whether a system qualifies as an AI system under Art. 3(1), and whether it falls into the high-risk category.

In certain cases, providers may justify that an Annex III system is not high-risk, provided this assessment is documented before the system is placed on the market or put into service, in line with Art. 6(3), and the system is registered under Art. 49(2).

-

Organisations must assess and demonstrate compliance with requirements under Art. 8–15, including:

• Risk management

• Data governance

• Technical documentation

• Transparency

• Human oversight

• Accuracy and cybersecurity

In addition, providers and deployers must meet their respective obligations, including documentation, monitoring, and, where applicable, fundamental rights impact assessments under Art. 27.
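
As a rough illustration of what a gap analysis over these requirement areas involves, the sketch below compares the listed areas against the evidence an organisation has on file and reports the areas with nothing recorded. It is a minimal sketch only: the function name `find_gaps`, the evidence structure, and the file names are hypothetical, and a real assessment would judge the quality of the evidence, not just its presence.

```python
# Requirement areas as listed above for high-risk AI systems.
REQUIREMENT_AREAS = [
    "Risk management",
    "Data governance",
    "Technical documentation",
    "Transparency",
    "Human oversight",
    "Accuracy and cybersecurity",
]


def find_gaps(evidence: dict[str, list[str]]) -> list[str]:
    """Return the requirement areas that have no recorded evidence."""
    return [area for area in REQUIREMENT_AREAS if not evidence.get(area)]


# Hypothetical evidence inventory: area -> documents on file.
evidence = {
    "Risk management": ["risk-register.xlsx"],
    "Data governance": [],
    "Human oversight": ["oversight-procedure.pdf"],
}

for area in find_gaps(evidence):
    print(f"Gap: no evidence recorded for '{area}'")
```

Areas that are missing from the inventory and areas with an empty evidence list are both treated as gaps, so the report errs on the side of flagging too much.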

-

Yes. An AI governance analysis helps structure the documentation and controls required to demonstrate compliance with the EU AI Act.

It supports:

• Preparation of technical documentation under Annex IV

• Implementation of quality management systems

• Readiness for conformity assessment procedures under Art. 43

These elements are essential for verifying that the system meets the requirements for high-risk AI systems before market placement or use.

Primary reference: Art. 43

Secondary references: Art. 8, Art. 11, Art. 16–17, Annex IV

-

Risk management is central to AI governance and is a mandatory requirement for high-risk AI systems.

It must be:

• Continuous and iterative

• Applied across the entire lifecycle

• Regularly reviewed and updated

The process includes:

• Identifying and assessing risks

• Evaluating risks under intended use and misuse

• Monitoring risks post-deployment

• Implementing mitigation measures

Risk management underpins compliance with all other requirements in Chapter III.

-

An AI governance analysis should be updated whenever there are changes affecting the system’s risk profile or compliance status.

This includes:

• Updates to the risk management system

• Changes in technical documentation

• Substantial modifications requiring a new conformity assessment under Art. 43(4)

• Updates to fundamental rights impact assessments where applicable

The process is continuous and should evolve with the system lifecycle.
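
The update triggers listed above can be sketched as a simple check that maps recorded changes to follow-up actions. This is an illustrative sketch under stated assumptions: the `Change` enum and the `review_actions` function are hypothetical names, and deciding whether a modification is "substantial" is a legal judgement that the code takes as an input and does not make itself.

```python
from enum import Enum


class Change(Enum):
    """Change types that should trigger an update, as listed above."""
    RISK_MANAGEMENT_UPDATE = "update to the risk management system"
    TECHNICAL_DOC_CHANGE = "change in technical documentation"
    SUBSTANTIAL_MODIFICATION = "substantial modification"
    FRIA_UPDATE = "update to a fundamental rights impact assessment"


def review_actions(changes: set[Change]) -> list[str]:
    """Map a set of recorded changes to the follow-up actions they imply."""
    actions = []
    if changes:
        # Any listed change affects the risk profile or compliance status.
        actions.append("update the AI governance analysis")
    if Change.SUBSTANTIAL_MODIFICATION in changes:
        # Substantial modifications require a new conformity assessment.
        actions.append("plan a new conformity assessment (Art. 43(4))")
    return actions


recorded = {Change.TECHNICAL_DOC_CHANGE, Change.SUBSTANTIAL_MODIFICATION}
for action in review_actions(recorded):
    print(action)
```

Running such a check at each release or review cycle is one way to keep the analysis in step with the system lifecycle.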